AI is steadily redefining healthcare by advancing diagnostics, strengthening clinical decision-making, and streamlining operations. As its adoption accelerates, regulators across the U.S., EU, India, and the Middle East are introducing stricter frameworks to ensure patient safety, safeguard data, and promote responsible, ethical use.

Today, AI compliance in healthcare is built on core elements like proven system performance, strong data management, algorithm transparency, and ongoing human oversight. While each region has its own regulatory approach, they all emphasize risk-based frameworks, continuous evaluation, and accountability.

At the same time, organizations need to manage key risks including algorithm bias, lack of transparency, data privacy issues, performance degradation over time, and overreliance on automation. A clear and structured approach to governance, compliance, and ethical AI development is essential for healthcare providers, pharmaceutical companies, and startups.

AI in Healthcare Compliance: How to Identify and Manage Risk

As AI becomes a core part of healthcare systems, the focus is shifting from adoption to accountability. Understanding potential risks and navigating evolving regulations is key to ensuring safe, reliable, and compliant AI-driven care.

The Growing Role of AI in Healthcare

Artificial Intelligence is rapidly reshaping healthcare, powering everything from early diagnosis and clinical decision support to predictive analytics and operational efficiency. As adoption accelerates, regulatory bodies across the globe are stepping in to ensure that innovation does not come at the cost of patient safety, data integrity, or ethical responsibility.

Between 2024 and 2025, major regulatory developments across the United States, European Union, India, and the Middle East have begun redefining how AI systems are designed, validated, and deployed in healthcare environments.

Regulatory Pressures

With AI playing a bigger role in care delivery, regulators are raising expectations around transparency, risk management, and compliance. Organizations are now expected to clearly demonstrate how AI systems are developed, validated, and monitored throughout their lifecycle, ensuring they remain safe, reliable, and aligned with evolving regulatory standards.

A Shift Toward Structured AI Governance

Healthcare regulators are no longer viewing AI as experimental technology. Instead, it is now treated as a critical component of care delivery that requires strict oversight, transparency, and lifecycle management.

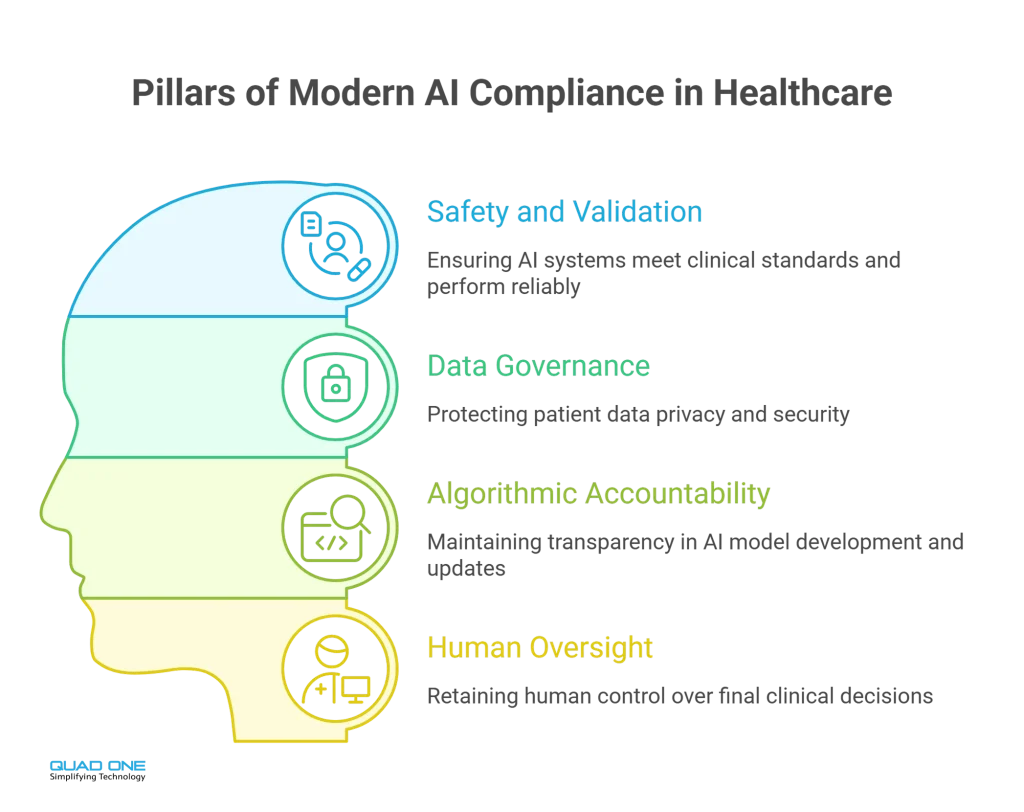

Modern AI compliance in healthcare is built on four core pillars:

- Safety and validation: AI systems must meet clinical standards and demonstrate reliable performance.

- Data governance: Strong data privacy, security, and compliance with evolving protection laws are essential.

- Algorithmic accountability: Developers must ensure transparency in how models are trained, tested, and updated.

- Human oversight: Final clinical decisions must always remain under human control.

These principles are now central to procurement, product development, and risk management strategies across healthcare organizations.

United States: Evolving AI Oversight

In the U.S., regulatory efforts center on evolving frameworks for AI-enabled medical devices. Newer approaches focus on managing adaptive algorithms that continue learning after deployment.

Recent developments include:

- Predefined update frameworks that allow AI systems to evolve without repeated approvals

- Lifecycle-based monitoring covering design, validation, and real-world performance

- Increased focus on transparency in AI-driven clinical tools

Although there is no single AI-specific law, compliance with data privacy regulations remains critical. Authorities now emphasize that AI systems, including third-party tools, must follow strict data protection, de-identification, and transparency standards.

At the same time, individual states are introducing laws around bias prevention, explainability, and patient consent, creating a layered regulatory landscape.

European Union: Risk-Based Regulation

The European Union has taken a structured, risk-based approach by classifying most healthcare AI systems as “high-risk.” This means stricter requirements for both developers and healthcare providers.

Key expectations include:

- Robust risk management and quality systems

- Detailed documentation of model performance

- Built-in human oversight to prevent over-reliance on automation

- Continuous monitoring and incident reporting

In parallel, new frameworks for health data sharing are enabling secure access to large datasets for AI training, while maintaining strict privacy safeguards. This balance supports innovation without compromising patient rights.

India: Strengthening Ethical and Legal Foundations

India is building a strong regulatory base focused on privacy, ethics, and accountability.

Recent developments include:

- Data protection laws enforcing consent-driven data usage and stricter storage controls

- Ethical AI guidelines promoting fairness, transparency, and human involvement

- Draft regulations for AI-based medical software, introducing risk classifications and validation requirements

These measures are pushing healthcare providers and startups to adopt secure, compliant, and ethically sound AI practices.

Middle East: Innovation with Data Control

Countries in the Middle East are advancing AI adoption while maintaining strict control over sensitive health data.

Key trends include:

- Regulatory frameworks emphasizing clinical validation and transparency

- Mandatory reporting and monitoring of AI system performance

- Strong data localization and protection requirements

This approach ensures patient trust while supporting rapid digital transformation in healthcare.

Identifying AI Risks in Healthcare

As AI becomes deeply embedded in healthcare systems, identifying and managing risks is critical. Common risk areas include:

- Bias in algorithms: Poor data diversity can lead to unequal outcomes

- Lack of transparency: Black-box models can reduce clinical trust

- Data privacy risks: Mishandling sensitive health data can lead to compliance violations

- Performance drift: AI systems may become less accurate over time without monitoring

- Overdependence on automation: Reduced human oversight can impact clinical judgment
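Performance drift, in particular, lends itself to a simple automated check. The following is a minimal sketch, assuming that clinician-confirmed outcomes become available for comparison with the model's predictions. The class name `DriftMonitor`, the window size, and the tolerance are hypothetical illustrations, not values taken from any regulation.

```python
from collections import deque


class DriftMonitor:
    """Tracks rolling accuracy of a deployed model against a validation baseline.

    All names and thresholds here are illustrative assumptions.
    """

    def __init__(self, baseline_accuracy: float, window: int = 100,
                 tolerance: float = 0.05):
        self.baseline_accuracy = baseline_accuracy
        self.tolerance = tolerance
        # Only the most recent `window` outcomes are kept.
        self.outcomes = deque(maxlen=window)

    def record(self, prediction, confirmed_label) -> None:
        """Store whether a prediction matched the clinician-confirmed label."""
        self.outcomes.append(prediction == confirmed_label)

    def rolling_accuracy(self):
        """Return accuracy over the current window, or None if it is empty."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def has_drifted(self) -> bool:
        """Flag drift when rolling accuracy falls below baseline minus tolerance."""
        accuracy = self.rolling_accuracy()
        return accuracy is not None and accuracy < self.baseline_accuracy - self.tolerance


# Usage: feed confirmed outcomes as they arrive and escalate on drift.
monitor = DriftMonitor(baseline_accuracy=0.90, window=10, tolerance=0.05)
for _ in range(10):
    monitor.record(prediction=1, confirmed_label=1)
if monitor.has_drifted():
    print("Rolling accuracy below tolerance: escalate for review")
```

A flag from a check like this would normally trigger human review rather than an automatic model change, which keeps it consistent with the oversight principle described earlier.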

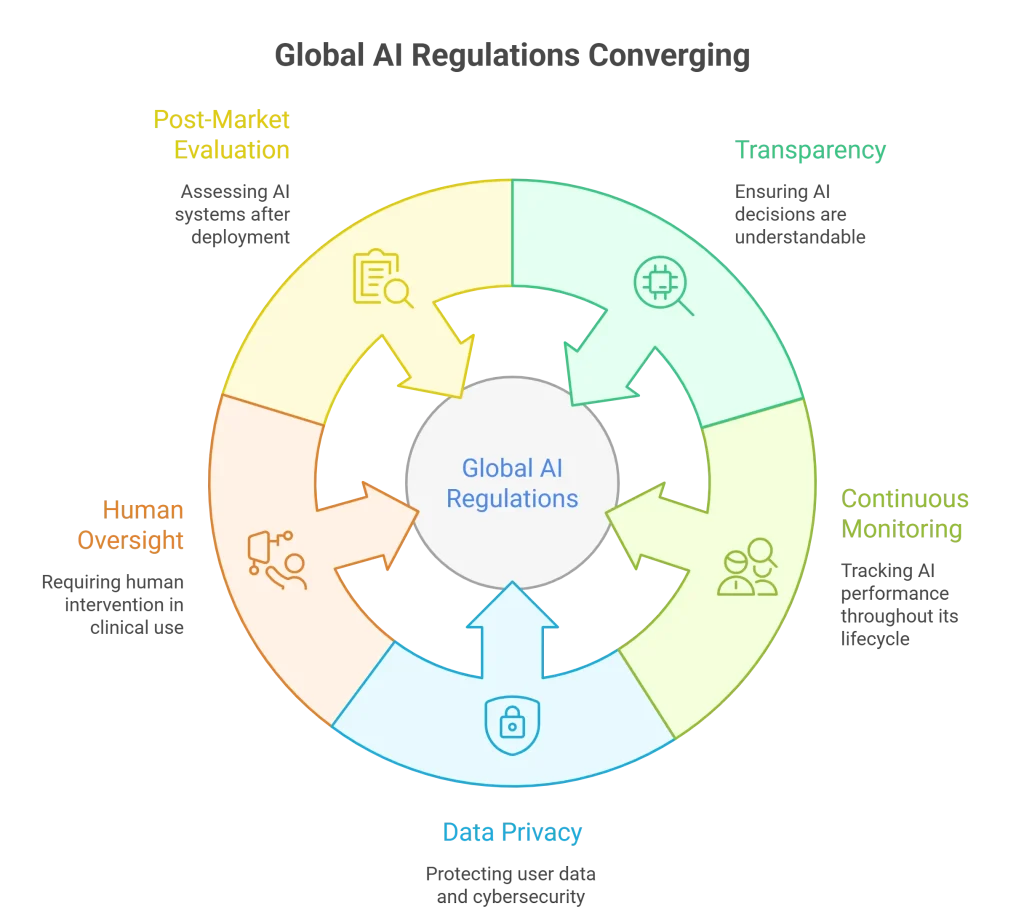

Global Alignment on Core Principles

Despite regional differences, global regulations are converging around shared priorities:

- Transparency and explainability in AI decisions: AI systems should provide clear and understandable insights into how decisions are made, helping build trust and support better clinical judgment.

- Continuous monitoring throughout the AI lifecycle: AI models need regular monitoring and updates to maintain accuracy, adapt to new data, and reduce risks of errors or bias.

- Strong data privacy and cybersecurity practices: Protecting patient data is essential, requiring strong security measures like encryption and secure access controls to prevent breaches.

- Mandatory human oversight in clinical use: AI should support, not replace, healthcare professionals, ensuring all decisions are reviewed and validated by humans.

- Ongoing post-market evaluation of AI systems: Deployed AI tools should be assessed against real-world performance so that safety or accuracy problems are detected and reported.

Strategic Approach to Managing AI Risk

Managing AI risk in healthcare calls for a proactive and well-defined approach. It involves spotting potential risks early, maintaining compliance throughout the lifecycle, and continuously monitoring systems for accuracy, safety, and ethical use. With the right strategy in place, organizations can build trust, minimize uncertainty, and scale AI solutions with confidence.

For Healthcare Providers

- Internal governance: Establish committees and policies to evaluate AI tools, monitor performance, and ensure ethical, safe use.

- Regulatory compliance: Follow local and global standards for data privacy, clinical safety, and algorithm accountability.

- Clinician training: Educate healthcare professionals to interpret AI outputs correctly and use them as decision support, not as a replacement for clinical judgment.
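One way to make the decision-support principle concrete in software is to ensure that no AI output becomes final without a named reviewer. The sketch below is a hypothetical illustration of that idea. The types `AISuggestion` and `Decision` and the status values are assumptions made for this example, not part of any standard or product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AISuggestion:
    patient_id: str
    finding: str
    confidence: float


@dataclass(frozen=True)
class Decision:
    suggestion: AISuggestion
    status: str  # "pending_review", "accepted", or "overridden"
    reviewer: Optional[str] = None
    final_finding: Optional[str] = None


def submit(suggestion: AISuggestion) -> Decision:
    """Every AI suggestion starts as pending, regardless of model confidence."""
    return Decision(suggestion=suggestion, status="pending_review")


def clinician_review(decision: Decision, reviewer: str, final_finding: str) -> Decision:
    """Record the clinician's conclusion and whether it matched the AI suggestion."""
    agreed = final_finding == decision.suggestion.finding
    status = "accepted" if agreed else "overridden"
    return Decision(decision.suggestion, status, reviewer, final_finding)


def is_final(decision: Decision) -> bool:
    """A decision is final only once a human reviewer is attached to it."""
    return decision.reviewer is not None


# Usage: the suggestion is not actionable until a clinician signs off.
pending = submit(AISuggestion("patient-001", "suspected pneumonia", 0.97))
reviewed = clinician_review(pending, reviewer="dr_example", final_finding="bronchitis")
```

Recording overrides alongside acceptances also gives a governance committee a record of how often clinicians disagree with a tool.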

For Medical Device and Pharma Companies

- Embed compliance into product design and development

- Maintain detailed documentation for audits and validation

- Use diverse datasets to reduce bias and improve accuracy

For medical device and pharma companies, it’s important to build compliance into AI tools right from the start, making sure they meet all regulatory and ethical standards. Keeping thorough records of design, data, and performance makes audits and validation easier. Using diverse, representative datasets also helps ensure the AI is accurate, fair, and works well for all types of patients.
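A basic way to check whether a model works comparably across patient groups is to compute its accuracy per subgroup and look at the gap. The sketch below assumes labeled validation records tagged with a group attribute. The function names and the record layout are hypothetical, and accuracy is only one of several fairness measures a team might choose.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Compute accuracy per subgroup.

    `records` is an iterable of (group, prediction, label) tuples;
    this layout is an assumption made for the example.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, prediction, label in records:
        total[group] += 1
        correct[group] += int(prediction == label)
    return {group: correct[group] / total[group] for group in total}


def max_accuracy_gap(records) -> float:
    """Return the difference between the best and worst subgroup accuracy."""
    scores = accuracy_by_group(records)
    if not scores:
        return 0.0
    return max(scores.values()) - min(scores.values())


# Usage with made-up validation records for two groups.
validation = [
    ("group_a", 1, 1), ("group_a", 0, 0), ("group_a", 1, 1), ("group_a", 0, 0),
    ("group_b", 1, 0), ("group_b", 0, 0), ("group_b", 1, 1), ("group_b", 0, 1),
]
print(accuracy_by_group(validation))
print(max_accuracy_gap(validation))
```

A large gap does not prove bias on its own, but it indicates where the training data or the model may need closer examination, and the result can be kept with the audit documentation.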

For Startups and Innovators

- Follow privacy-first design principles

- Build transparent and ethical AI systems

- Collaborate with regulators and healthcare providers for compliant innovation

From Compliance to Competitive Advantage

The wave of AI regulation is not slowing innovation; it is shaping it responsibly. By focusing on transparency, fairness, and patient protection, regulators are building a framework for sustainable growth.

For healthcare organizations, compliance is no longer optional. It directly impacts trust, scalability, and market access. Those who proactively align with evolving regulations will be better positioned to unlock AI’s full potential while ensuring safe, ethical, and effective patient care.

Conclusion

AI regulation in healthcare is not a barrier but a foundation for responsible innovation. As global standards continue to evolve, organizations that prioritize compliance, transparency, and ethical practices will gain a competitive edge.

By embedding risk management and governance into AI strategies, healthcare stakeholders can build trust, ensure patient safety, and unlock the full potential of AI, creating a future where technology and care work seamlessly together.