Generative AI in healthcare is no longer just hype. In 2026, it is a $3.3 billion-plus reality transforming drug discovery, diagnostic accuracy, and clinical workflows. While “autonomous AI doctors” remain a future concept, the current reality is a human-in-the-loop model in which AI augments physician decision-making, with reported reductions in diagnostic errors of up to 85% and drug development timelines shortened by years. According to Accenture, AI applications have the potential to save the US healthcare system more than $150 billion annually.

This guide maps the generative AI landscape in healthcare as it stands in 2026: what is working, what is not, where the market is heading, and how healthcare leaders should prepare. It covers diagnostics, drug discovery, clinical documentation, patient engagement, ethics, and implementation strategy.

| 2026 Snapshot: Generative AI in healthcare is a $3.3B+ market in 2026, projected to exceed $22B by 2032. Over 70% of FDA-cleared AI tools focus on medical imaging. Ambient AI documentation tools (like Nuance DAX Copilot) are deployed across major health systems. Drug discovery timelines are compressing from 10+ years to 3–5 years for AI-assisted candidates. The dominant adoption model is human-in-the-loop: AI recommends, clinicians decide. |

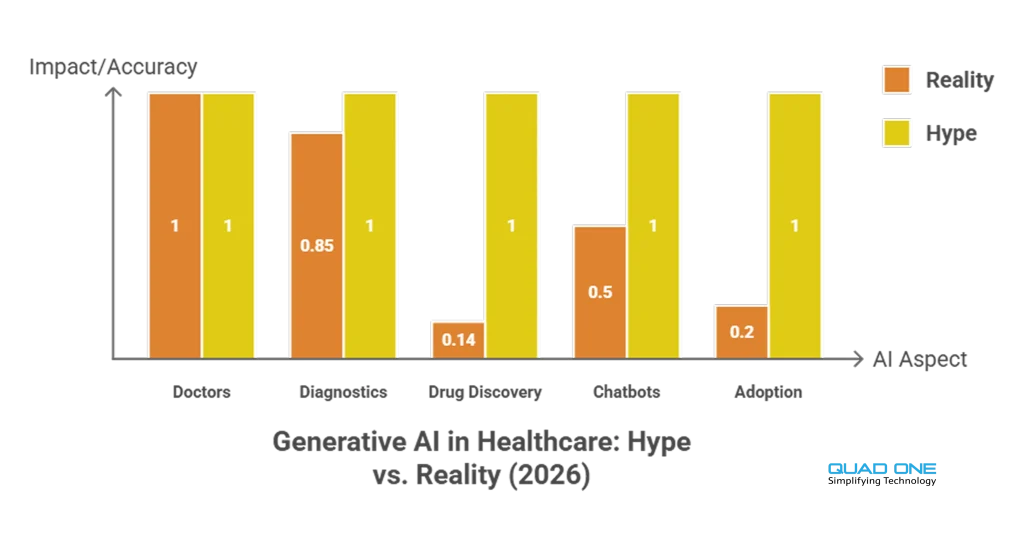

Generative AI in Healthcare: Hype vs. Reality

The conversation around AI in healthcare often oscillates between utopian promises and sceptical dismissal. Here is where things actually stand in 2026:

Hype: AI will replace doctors. Reality: AI is a decision-support tool. The human-in-the-loop model dominates every clinical deployment. AI flags anomalies, suggests differentials, and drafts documentation. Clinicians verify, contextualise, and decide. No regulatory body has approved fully autonomous clinical AI for diagnosis or treatment.

Hype: AI diagnostics are perfect. Reality: AI diagnostic tools are highly accurate in controlled settings (breast cancer detection, diabetic retinopathy screening, acute kidney injury prediction). But accuracy drops when models encounter edge cases, rare diseases, or patient populations not represented in training data. Bias in training data is a known, partially addressed risk.

Hype: AI will solve the drug discovery crisis overnight. Reality: AI is compressing drug candidate identification from years to weeks. Atomwise, Insilico Medicine, and others have AI-identified candidates in clinical trials. But regulatory approval, safety testing, and manufacturing timelines remain. AI accelerates the front end, not the full pipeline.

Hype: Generative AI chatbots can provide medical advice. Reality: LLMs like GPT-4 and Med-PaLM 2 perform well on medical Q&A benchmarks but still hallucinate, struggle with drug-interaction queries, and lack real-time patient context. They are effective for patient education, administrative tasks, and preliminary triage when paired with clinical guardrails. They are not licensed to practise medicine.

What Is the Difference Between Predictive AI and Generative AI in Medicine?

These two terms are often conflated, but they serve fundamentally different functions in healthcare.

Predictive AI analyses historical data to forecast outcomes. In healthcare, it powers risk stratification (which patients are likely to be readmitted), early warning systems (acute kidney injury prediction 48 hours before onset), and resource demand forecasting (ICU bed utilisation). It answers the question: “What is likely to happen next?”

Generative AI creates new content: text, images, molecular structures, synthetic data. In healthcare, it powers clinical note generation (ambient documentation from doctor-patient conversations), drug candidate design (generating novel molecular structures), synthetic medical imaging for training AI models, and patient-facing content (educational materials, chat responses). It answers the question: “What should be created?”

In practice, the most effective healthcare AI systems combine both. A predictive model identifies a high-risk patient; a generative model drafts the personalised outreach message. A predictive model flags a suspicious radiology finding; a generative model produces the structured report. The distinction matters for procurement, regulation, and risk assessment, because generative outputs need additional validation (hallucination risk) that predictive outputs typically do not.
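The pairing described above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the `Patient` fields, the hand-picked weights in `readmission_risk`, the 0.5 threshold, and the template in `draft_outreach` are all hypothetical stand-ins. A real system would use a trained and validated risk model, and an LLM call with clinical guardrails where the template sits.

```python
from dataclasses import dataclass


@dataclass
class Patient:
    name: str
    age: int
    prior_admissions: int
    chronic_conditions: int


def readmission_risk(p: Patient) -> float:
    """Toy predictive step: a hand-weighted score in [0, 1], not a trained model."""
    score = 0.1 + 0.15 * p.prior_admissions + 0.1 * p.chronic_conditions
    if p.age >= 65:
        score += 0.2
    return min(score, 1.0)


def draft_outreach(p: Patient) -> str:
    """Stand-in for the generative step: a template where an LLM call would go."""
    return (
        f"Dear {p.name}, our care team would like to schedule a follow-up "
        "call with you this week. [DRAFT: pending clinician review]"
    )


def outreach_queue(patients: list[Patient], threshold: float = 0.5) -> list[str]:
    """Predict first, then generate drafts only for patients at or above the threshold."""
    drafts = []
    for p in patients:
        if readmission_risk(p) >= threshold:
            drafts.append(draft_outreach(p))
    return drafts
```

The split keeps the two risk profiles separate: the score can be validated like any predictive model, while the generated text is explicitly marked as a draft that still needs human review.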

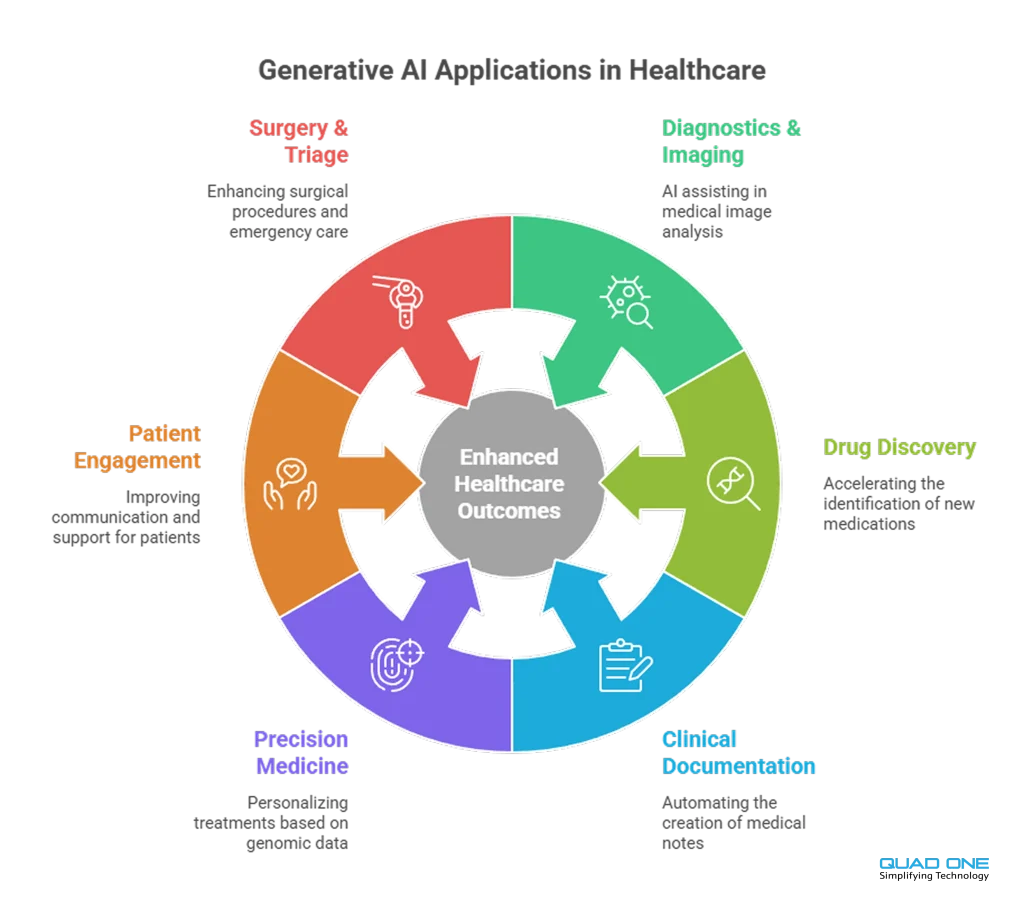

Key Applications of Generative AI in Healthcare (2026)

1. AI-Powered Diagnostics and Medical Imaging

Over 70% of FDA-cleared AI tools focus on medical imaging. Deep learning algorithms analyse X-rays, CT scans, MRIs, and pathology slides to detect anomalies that may be missed by human review. In published reader studies, AI algorithms have outperformed human radiologists in detecting breast cancer from mammograms. Google’s DeepMind has developed algorithms that predict acute kidney injury up to 48 hours before it occurs. In 2026, AI-powered imaging is standard in radiology departments at major health systems, operating as a “second reader” that flags findings for clinician review.

2. Drug Discovery and Development

AI accelerates drug development by predicting potential drug candidates and optimising clinical trials. AI algorithms simulate drug interactions with biological targets at a scale and speed impossible for human researchers. Atomwise uses AI to predict molecular behaviour, accelerating identification of potential candidates for diseases like Ebola and multiple sclerosis. Insilico Medicine brought an AI-discovered drug candidate to Phase II clinical trials in a fraction of the traditional timeline. AI also enables drug repurposing, uncovering new therapeutic applications for existing compounds.

3. Clinical Documentation Automation

Physician burnout is a systemic crisis. Clinicians spend a significant portion of their time on documentation rather than patient care. Ambient AI tools like Nuance’s DAX Copilot record and summarise doctor-patient conversations, auto-generating clinical notes and reducing charting time. These tools integrate with EHR platforms (notably Epic) and are deployed across major US health systems. The result: clinicians reclaim hours per week, documentation accuracy improves, and patient face-time increases.

4. Personalised Treatment and Precision Medicine

AI models evaluate patient genetics, treatment responses, and lifestyle factors to recommend individualised therapies. In oncology, AI analyses tumour genomics to suggest targeted therapies that maximise efficacy and minimise side effects. Personalised AI-driven treatment plans can improve adherence and outcomes by matching interventions to individual patient profiles rather than population averages.

5. Patient Engagement and Communication

AI-powered chatbots, virtual assistants, and automated follow-ups provide 24/7 support and personalised care. Natural language processing enables communication in multiple languages, increasing accessibility across diverse populations. AI-based mobile applications provide customised health recommendations, medication reminders, and instant alerts.

Quad One’s AI WhatsApp Bot is one example: patients interact in their own language for scheduling, reports, and support, all within WhatsApp, with no app download required.

For hospital-wide engagement orchestration, Quad One’s AI hospital CRM connects patient communication, scheduling, feedback, and follow-up workflows into a single platform powered by AI.

6. Robotic-Assisted Surgery and Emergency Triage

AI-powered surgical robots enhance precision, reducing recovery time and improving patient safety. In emergency departments, AI-driven triage systems assess patient symptoms and prioritise critical cases, ensuring faster medical attention. AI-enabled portable diagnostics bring accurate screening to remote and resource-limited settings using handheld devices (ultrasound, ECG) equipped with AI interpretation.

Can Generative AI Reduce Physician Burnout and Administrative Load?

The short answer is yes, and it is already happening. The longer answer involves understanding where clinician time actually goes.

Studies consistently show that physicians spend 1–2 hours on documentation for every hour of direct patient care. EHR “pajama time” (charting after hours) is a leading contributor to burnout. Generative AI addresses this directly through ambient clinical documentation: AI listens to the patient encounter (with consent), generates a structured SOAP note, and pushes it to the EHR for clinician review and sign-off.
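The review-and-sign-off step is what keeps this workflow human-in-the-loop, and it can be enforced in software rather than left to habit. The sketch below is one hypothetical way to do that: `DraftNote`, `sign`, and `file_to_ehr` are illustrative names, and the plain Python list stands in for a real EHR integration, which would look quite different.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DraftNote:
    """A SOAP note drafted by an AI tool, awaiting clinician review."""
    subjective: str
    objective: str
    assessment: str
    plan: str
    signed_by: Optional[str] = None

    def sign(self, clinician_id: str) -> None:
        """Record that a clinician has reviewed and approved the draft."""
        if not clinician_id:
            raise ValueError("a clinician identifier is required to sign")
        self.signed_by = clinician_id


def file_to_ehr(note: DraftNote, chart: list) -> None:
    """Append the note to the chart only if a clinician has signed it."""
    if note.signed_by is None:
        raise PermissionError("unsigned AI draft cannot be filed")
    chart.append(note)
```

Because filing raises an error for unsigned drafts, an AI-generated note cannot reach the chart without an explicit clinician action.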

Beyond documentation, AI reduces administrative load through automated prior authorisation workflows, AI-generated patient communication (appointment reminders, discharge summaries, educational content), and intelligent inbox management that triages patient portal messages by urgency and routes them to the appropriate team member.
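To make the inbox triage idea concrete, here is a deliberately simple sketch. It uses keyword matching where a deployed system would more likely use a trained classifier or an LLM, and the urgent terms, category labels, and queue names are all invented for illustration.

```python
URGENT_TERMS = ("chest pain", "shortness of breath", "bleeding")

# Hypothetical mapping from message category to the team queue that handles it.
ROUTES = {
    "urgent": "nurse_triage",
    "medication": "pharmacy",
    "billing": "front_desk",
    "routine": "care_team_inbox",
}


def classify(message: str) -> str:
    """Assign a coarse category; urgent terms take priority over everything else."""
    text = message.lower()
    if any(term in text for term in URGENT_TERMS):
        return "urgent"
    if "refill" in text or "prescription" in text:
        return "medication"
    if "bill" in text or "invoice" in text:
        return "billing"
    return "routine"


def route(messages: list[str]) -> list[tuple[str, str]]:
    """Return (queue, message) pairs with urgent messages moved to the front."""
    labelled = [(classify(m), m) for m in messages]
    # sort() is stable, so non-urgent messages keep their arrival order.
    labelled.sort(key=lambda pair: pair[0] != "urgent")
    return [(ROUTES[label], m) for label, m in labelled]
```

Even in this toy form the design choice is visible: the system sorts and routes, while the decision about how to respond stays with the team member who receives the message.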

The net effect: clinicians reclaim meaningful time for patient care, and administrative staff shift from manual execution to oversight and exception handling. This is not theoretical. Health systems deploying ambient AI documentation report measurable reductions in after-hours charting and improvements in clinician satisfaction scores.

What Are the Primary Risks of Using LLMs in a Clinical Setting?

Despite clear benefits, AI adoption in healthcare raises critical risks that must be managed proactively:

Hallucination. Large language models generate plausible-sounding but factually incorrect outputs. In a clinical setting, a hallucinated drug interaction or dosage recommendation could cause patient harm. Every LLM output that touches clinical decisions must be verified by a licensed professional.

Bias in training data. AI models trained on datasets that underrepresent certain demographics (race, age, sex, geography) will produce less accurate results for those populations. Bias auditing, diverse training data, and ongoing monitoring are essential.

Data privacy and security. AI systems ingest vast amounts of patient data. HIPAA compliance, data de-identification for model training, end-to-end encryption, and audit logging are non-negotiable. The vendor must sign a Business Associate Agreement (BAA).

Regulatory uncertainty. The FDA has cleared over 800 AI-enabled medical devices, but regulatory frameworks for generative AI in clinical settings are still evolving. Healthcare providers must stay current with FDA guidance, CMS policies, and state-level regulations.

Over-reliance and deskilling. If clinicians defer to AI without critical evaluation, diagnostic skills may atrophy. The human-in-the-loop model must be enforced by system design (AI suggests, clinician confirms) and reinforced through training.
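The bias auditing mentioned under the training-data risk can start with something as basic as comparing sensitivity across demographic groups. The sketch below assumes evaluation records of the form `(group, true_label, predicted_label)` and a 0.1 gap tolerance. Both are illustrative choices, and a real audit would also look at specificity, calibration, and sample sizes.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """Compute sensitivity (true positive rate) per demographic group.

    Each record is (group, true_label, predicted_label) with labels 0 or 1.
    Groups with no positive cases are omitted.
    """
    true_positives = defaultdict(int)
    positives = defaultdict(int)
    for group, truth, pred in records:
        if truth == 1:
            positives[group] += 1
            if pred == 1:
                true_positives[group] += 1
    return {g: true_positives[g] / positives[g] for g in positives}


def flag_gaps(rates, max_gap=0.1):
    """Return groups whose sensitivity trails the best group by more than max_gap."""
    if not rates:
        return []
    best = max(rates.values())
    return sorted(g for g, r in rates.items() if best - r > max_gap)
```

Running a check like this on every model update, not only at procurement, is one way to turn "ongoing monitoring" into a routine task.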

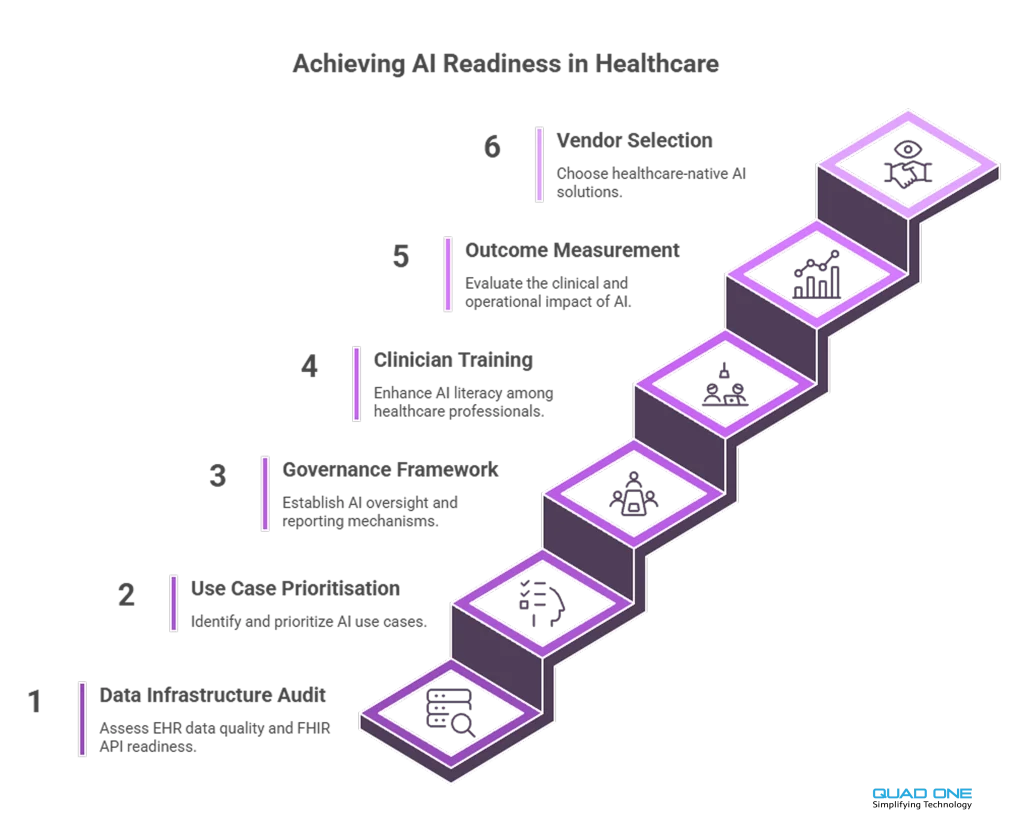

How Should Healthcare Providers Prepare for AI Implementation in 2026?

Responsibly adopting AI requires a structured approach. Here is a practical framework for healthcare leaders:

1. Audit your data infrastructure. AI models require clean, normalised, interoperable data. Assess your EHR data quality, FHIR API readiness, and data governance policies before procuring AI tools.

2. Start with high-impact, low-risk use cases. Clinical documentation automation and patient communication are proven, lower-risk entry points. Diagnostic AI requires more rigorous validation and regulatory compliance.

3. Establish a governance framework. Define who approves AI tool procurement, who monitors performance, how bias is audited, and how adverse events are reported. Assign clinical AI oversight to a cross-functional committee (IT, clinical leadership, compliance, patient safety).

4. Invest in clinician training. AI literacy is now a core competency. Clinicians need to understand how AI tools generate outputs, where they can fail, and how to critically evaluate AI-assisted recommendations.

5. Measure outcomes, not just adoption. Track clinical impact (diagnostic accuracy, documentation time saved, patient outcomes) alongside operational metrics (adoption rates, cost savings). Use A/B testing where possible.

6. Partner with healthcare-focused AI vendors. Solutions built for healthcare (not adapted from other industries) will have built-in compliance, clinical validation, and EHR interoperability. Explore Quad One’s AI in healthcare platform to see how purpose-built AI connects diagnostics, engagement, and operations.
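For step 5, an A/B comparison does not need heavy tooling. As a rough sketch, the function below runs a permutation test on documentation minutes per encounter for clinicians with and without an AI tool. The function name, the resample count, and the fixed seed are assumptions for illustration; a real evaluation would need proper study design and far larger samples.

```python
import random
from statistics import mean


def permutation_test(control, treated, n_resamples=2000, seed=0):
    """Two-sided permutation test on the difference in means.

    Returns (observed_difference, p_value), where the difference is
    mean(control) - mean(treated).
    """
    rng = random.Random(seed)
    observed = mean(control) - mean(treated)
    pooled = list(control) + list(treated)
    n_control = len(control)
    extreme = 0
    for _ in range(n_resamples):
        # Shuffle group labels and recompute the difference under the null.
        rng.shuffle(pooled)
        diff = mean(pooled[:n_control]) - mean(pooled[n_control:])
        if abs(diff) >= abs(observed):
            extreme += 1
    # Add-one correction keeps the estimate away from an impossible p = 0.
    return observed, (extreme + 1) / (n_resamples + 1)
```

A call such as `permutation_test(minutes_without_ai, minutes_with_ai)` returns the average time saved together with a p-value, which gives the governance committee something firmer than adoption counts to review.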

Conclusion

AI is transforming healthcare by improving diagnostic accuracy, personalising treatment, streamlining workflows, and strengthening patient engagement. The potential is substantial, but realising it requires responsible adoption: clean data, clinical governance, human-in-the-loop design, and continuous outcome measurement.

As technology progresses, the hospitals and health systems that invest in AI infrastructure today will define the standard of care tomorrow. The question is no longer whether to adopt AI, but how to do it responsibly, measurably, and at the pace your organisation can absorb.

Book a demo with Quad One to see how our AI-powered healthcare platform connects diagnostics, patient engagement, clinical workflows, and hospital CRM in one system built for responsible AI adoption.